Overview

What if you could tell a robot "go to the kitchen" and it just worked? This project connects large language models directly to a ROS2 navigation stack, enabling natural-language control of a simulated mobile robot. The LLM acts as an autonomous agent: it interprets commands, queries its knowledge base for coordinates, checks the robot's current position, and orchestrates navigation without any hardcoded command parsing.

Diagrams

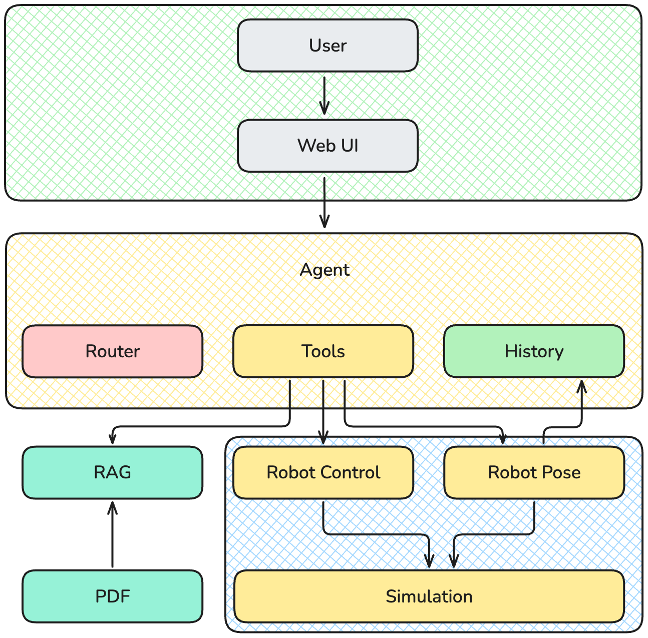

Architecture

The system is split into two containers: one runs the full Nav2 stack with Gazebo, the other runs the LLM agent. They communicate over ROS2 DDS and share the host network and IPC namespace, which lets the chatbot discover and interact with Nav2 topics and actions as if both were running on the same machine.

The key challenge: ROS2 requires a spinning executor to process callbacks, but the LLM agent is async and can't block. Solution: a dedicated background thread runs the ROS2 executor continuously, while the main thread handles LLM inference. Tool calls access a shared node instance that's always ready to send goals or read cached pose data.
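The background-executor pattern described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: `PoseCache`, `start_ros_thread`, and the node name `llm_bridge` are hypothetical names, and the ROS2 imports are done lazily inside the function so the module can still be loaded on a machine without ROS2 installed.

```python
import threading
from typing import Optional, Tuple


class PoseCache:
    """Thread-safe holder for the most recent (x, y, yaw) pose."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._pose: Optional[Tuple[float, float, float]] = None

    def update(self, x: float, y: float, yaw: float) -> None:
        # Called from the ROS2 executor thread (subscription callback).
        with self._lock:
            self._pose = (x, y, yaw)

    def latest(self) -> Optional[Tuple[float, float, float]]:
        # Called from the main thread (LLM tool calls); never blocks on ROS2.
        with self._lock:
            return self._pose


def start_ros_thread(node_name: str = "llm_bridge"):
    """Create a node and spin it in a daemon thread.

    Assumes a sourced ROS2 environment; node_name is a hypothetical default.
    """
    import rclpy
    from rclpy.executors import SingleThreadedExecutor
    from rclpy.node import Node

    rclpy.init()
    node = Node(node_name)
    executor = SingleThreadedExecutor()
    executor.add_node(node)
    # daemon=True so the spinning thread does not keep the process alive on exit.
    thread = threading.Thread(target=executor.spin, daemon=True)
    thread.start()
    return node, executor, thread
```

With this split, the main thread only ever reads from the cache or calls non-blocking methods on the shared node, while all callbacks are processed on the background thread.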

The LLM interface uses OpenRouter as the backend, allowing hot-swapping between any of the available models without code changes. The chat UI is built with Chainlit, providing WebSocket-based streaming and conversation management out of the box.
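One way to get model swapping without code changes is to read the model id from configuration and pass it through to OpenRouter's OpenAI-compatible chat completions endpoint. The sketch below is an assumption about how this could be wired, not a description of the project's code: the environment variable names `LLM_MODEL` and `OPENROUTER_API_KEY`, the default model id, and the helper names are all illustrative.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(messages, model=None, api_key=None):
    """Build (but do not send) a chat completion request.

    The model id comes from the LLM_MODEL environment variable (hypothetical
    name) unless given explicitly, so switching models needs no code change.
    """
    model = model or os.environ.get("LLM_MODEL", "openai/gpt-4o-mini")  # example id
    api_key = api_key or os.environ.get("OPENROUTER_API_KEY", "")
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        OPENROUTER_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def chat(messages, model=None):
    """Send the request and return the assistant's text reply."""
    request = build_chat_request(messages, model)
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.load(response)["choices"][0]["message"]["content"]
```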

Agentic Reasoning

The LLM doesn't just execute single commands; it reasons through multi-step tasks. When you say "go to the kitchen", the agent autonomously: 1) queries RAG for the kitchen's coordinates, 2) checks the current robot pose to see if it's already there, 3) sends a navigation goal only if needed.

This is achieved through a recursive tool loop: tool results are fed back to the model, which decides what to do next until the task is complete. The model handles edge cases naturally: if RAG returns no coordinates, it tells the user; if the robot is already at the target, it skips navigation.
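The shape of such a recursive tool loop can be illustrated with a small, ROS-free sketch. Everything here is a stand-in: `scripted_model` replaces the real LLM with a fixed decision script, and the tool names (`lookup_location`, `get_pose`, `navigate_to`), the coordinates, and the 0.2 m "already there" tolerance are hypothetical.

```python
import json
import math


def run_agent(model, tools, messages, max_steps=8):
    """Ask the model, run any requested tool, feed the result back, recurse."""
    if max_steps == 0:
        return "Stopped: step limit reached."
    reply = model(messages)
    if "tool" not in reply:
        return reply["content"]  # final answer, loop ends
    result = tools[reply["tool"]](**reply.get("args", {}))
    messages.append(
        {"role": "tool", "name": reply["tool"], "content": json.dumps(result)}
    )
    return run_agent(model, tools, messages, max_steps - 1)


def scripted_model(messages):
    """Stand-in for the LLM: picks the next step from the tool results so far."""
    results = [json.loads(m["content"]) for m in messages if m["role"] == "tool"]
    if len(results) == 0:
        return {"tool": "lookup_location", "args": {"name": "kitchen"}}
    if len(results) == 1:
        if results[0] is None:
            return {"content": "I don't know where the kitchen is."}
        return {"tool": "get_pose", "args": {}}
    if len(results) == 2:
        target, pose = results
        if math.hypot(target["x"] - pose["x"], target["y"] - pose["y"]) < 0.2:
            return {"content": "Already in the kitchen."}
        return {"tool": "navigate_to", "args": target}
    return {"content": "Navigating to the kitchen."}


sent_goals = []  # records goals instead of driving a real robot


def lookup_location(name):
    return {"kitchen": {"x": 3.0, "y": 1.5}}.get(name)


def get_pose():
    return {"x": 0.0, "y": 0.0}


def navigate_to(x, y):
    sent_goals.append((x, y))
    return "goal accepted"


TOOLS = {
    "lookup_location": lookup_location,
    "get_pose": get_pose,
    "navigate_to": navigate_to,
}
```

Calling `run_agent(scripted_model, TOOLS, [{"role": "user", "content": "go to the kitchen"}])` walks through lookup, pose check, and navigation in turn. The `max_steps` guard is an added safety measure so a misbehaving model cannot loop forever.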

ROS2 Tools

Navigation goals are sent asynchronously via an ActionClient to avoid blocking the LLM response loop. Pose queries use TRANSIENT_LOCAL QoS to receive the last published AMCL pose immediately on subscription.
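A rough sketch of both tools with `rclpy` is shown below. It assumes the standard Nav2 names (the `navigate_to_pose` action, the `amcl_pose` topic, the `map` frame); the `NavTools` class and the yaw helpers are hypothetical, and the ROS2 imports are kept inside the constructor so the pure helpers can be used without ROS2 installed.

```python
import math


def yaw_to_quaternion(yaw):
    """Return (x, y, z, w) for a rotation of yaw radians about the z axis."""
    return (0.0, 0.0, math.sin(yaw / 2.0), math.cos(yaw / 2.0))


def quaternion_to_yaw(z, w):
    """Inverse of yaw_to_quaternion for a planar (z-axis only) rotation."""
    return 2.0 * math.atan2(z, w)


class NavTools:
    """Hypothetical wrapper around a shared node that is spun elsewhere."""

    def __init__(self, node):
        from geometry_msgs.msg import PoseWithCovarianceStamped
        from nav2_msgs.action import NavigateToPose
        from rclpy.action import ActionClient
        from rclpy.qos import DurabilityPolicy, QoSProfile, ReliabilityPolicy

        self._node = node
        self._goal_type = NavigateToPose
        self._client = ActionClient(node, NavigateToPose, "navigate_to_pose")
        self.last_pose = None  # (x, y, yaw) once the first message arrives

        # TRANSIENT_LOCAL delivers the publisher's last sample to late joiners.
        latched = QoSProfile(
            depth=1,
            reliability=ReliabilityPolicy.RELIABLE,
            durability=DurabilityPolicy.TRANSIENT_LOCAL,
        )
        node.create_subscription(
            PoseWithCovarianceStamped, "amcl_pose", self._on_pose, latched
        )

    def _on_pose(self, msg):
        pose = msg.pose.pose
        yaw = quaternion_to_yaw(pose.orientation.z, pose.orientation.w)
        self.last_pose = (pose.position.x, pose.position.y, yaw)

    def send_goal(self, x, y, yaw=0.0):
        """Send a goal without waiting; returns a future, or None if no server."""
        if not self._client.server_is_ready():
            return None
        goal = self._goal_type.Goal()
        goal.pose.header.frame_id = "map"
        goal.pose.header.stamp = self._node.get_clock().now().to_msg()
        goal.pose.pose.position.x = float(x)
        goal.pose.pose.position.y = float(y)
        _, _, qz, qw = yaw_to_quaternion(yaw)
        goal.pose.pose.orientation.z = qz
        goal.pose.pose.orientation.w = qw
        return self._client.send_goal_async(goal)
```

Because `send_goal_async` returns a future immediately, the tool call can report "goal sent" to the model right away, and a done-callback on the future can record acceptance or rejection later.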

RAG Pipeline

Documents are processed through a Retrieval-Augmented Generation pipeline for semantic search over project documentation and location coordinates.